
Harvesting or helping? Using AI to fill out your tax return forms

As tax season arrives, you can file your tax return yourself or get help from someone else. But what if you let an AI chatbot step in? It's a tempting choice: always available, free, and ready to help when professional assistance is too expensive or hard to find as deadlines loom. This study explores whether turning to AI chatbots is as smart a move as it sounds.

OpenAI's ChatGPT, Google's Gemini, and xAI's Grok have emerged as the frontrunners among AI chatbots and tools. According to recent Similarweb data, these three platforms together account for nearly 84% of all traffic in the sector, making them the most likely choices for people seeking advice, including on taxes. ChatGPT leads with 5.4B monthly visitors, followed by Gemini with 2.1B and Grok with 0.3B.¹

Key insights

- Simulated conversations about tax returns on the world's most popular AI chatbots (ChatGPT, Gemini, and Grok) showed a clear pattern: users were actively pushed to provide personal information, starting with their job, income, or country, even after a neutral prompt like “tax return.” ChatGPT was the most persistent, while Gemini and Grok were easier to navigate for users who wanted to avoid entering personal data. For example, when Gemini asked for personal information and the user declined, it smoothly continued the conversation, using example data where needed. ChatGPT, in contrast, made several consecutive attempts to steer users toward sharing sensitive information.

- To illustrate AI chatbots' data collection behavior, consider an interaction with ChatGPT. Its first response ends with a request: “Just tell me your job and approximate yearly income, and I can estimate your refund.” If the user ignores this request, ChatGPT repeats it in the next response and even asks for more data. If the user keeps ignoring these requests, ChatGPT adopts a more assertive tone, with phrases like “Please reply with these” and “You can answer like this example.” Finally, once the user replies “no,” the chatbot stops offering estimates. Gemini, by contrast, responds to a “no” with: “No worries at all! Since you'd rather not share your specific numbers, I've put together a ‘cheat sheet’ for the current 2025–26 financial year (ending June 30, 2026). You can use this to do the math yourself.”

- AI chatbots can gather user information beyond what users explicitly provide in their prompts. For instance, in a simulated interaction over a VPN connected to an Australian server, ChatGPT tailored its responses to the user's location, opening with phrases such as “If you're in Australia” and offering tax details specific to that country. Gemini also provided information relevant to Australia but added details for the US and UK. This broader coverage makes its data collection less obvious, and potentially less suspicious, to users who aren't familiar with its Terms of Service and Privacy Policy. Grok, on the other hand, focused on US tax returns and offered to tailor its answers if users shared more about their circumstances, such as their country, income type, or specific questions.

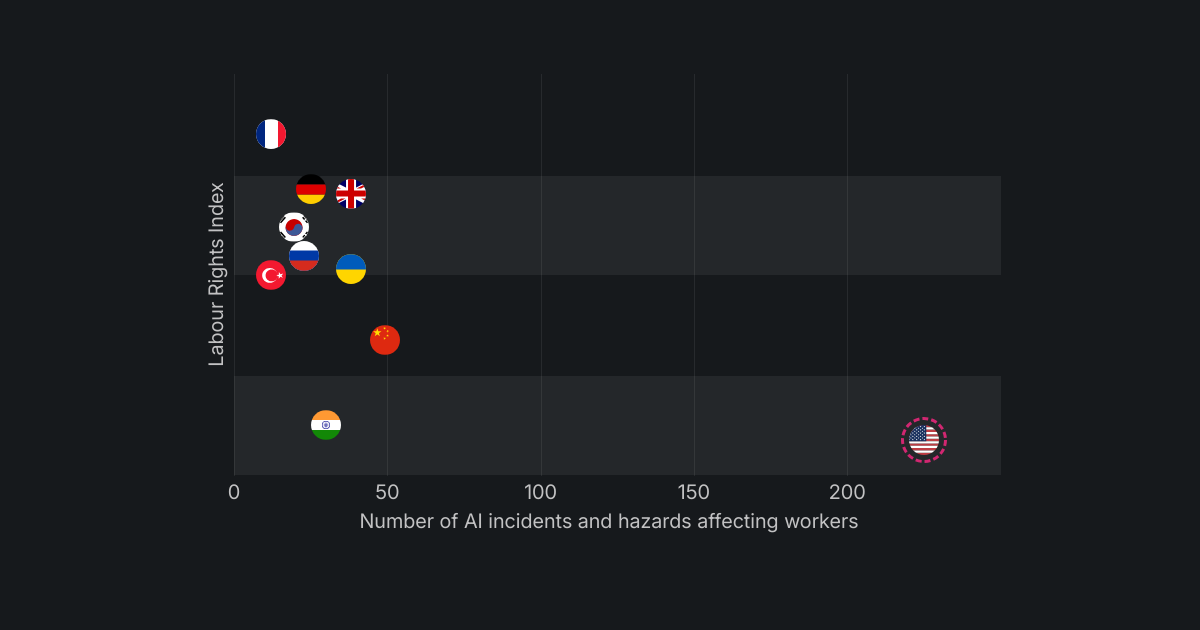

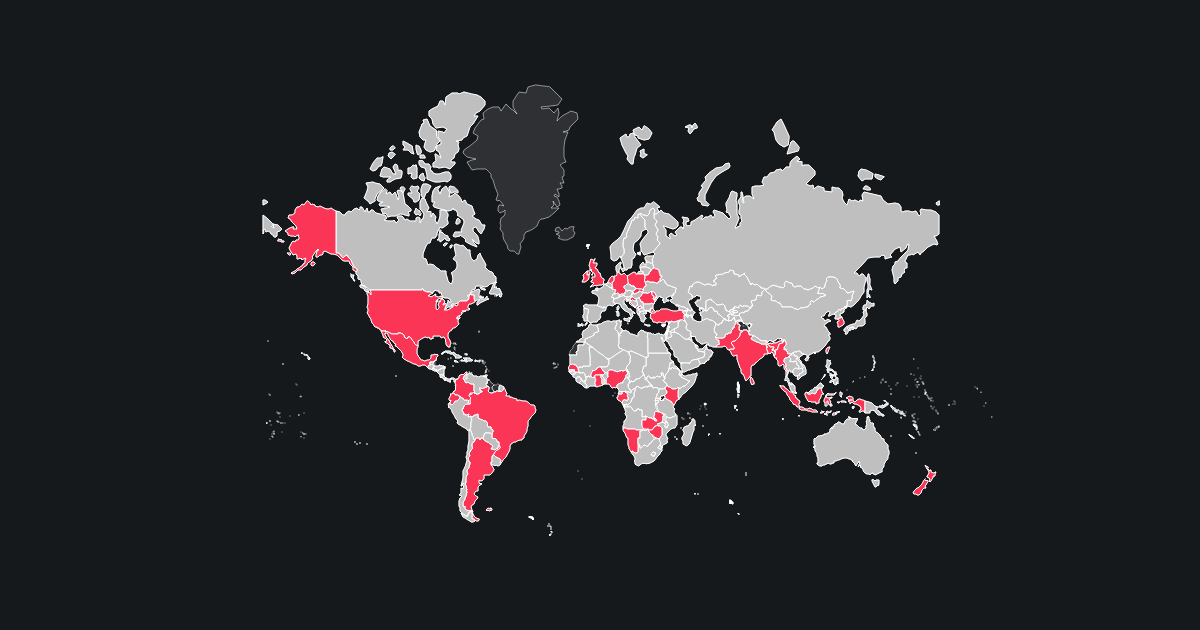

- This example aligns with the findings of a study Surfshark conducted last year on the data collection practices of the top AI chatbots on the Apple App Store.² The study showed how data-hungry some of these chatbots can be, with certain apps collecting up to 32 of 35 possible data types. Location data is just one example of the information these chatbots may gather, which underscores the importance of understanding their data collection practices.

- That said, Grok can be frustrating to use because it frequently prompts users to sign up. Once users are signed in, companies can gain insight into their habits and interests or target them with ads, as OpenAI has already announced plans to do in ChatGPT.³ In the simulated conversations, Grok's replies were often interrupted by a “high demand” notice, forcing users to either wait or sign up for higher-priority access. After the fifth prompt, Grok also hit a message limit that stopped the conversation from continuing. ChatGPT similarly asked users to create an account to unlock features such as uploading files or images or accessing enhanced capabilities. Gemini's approach was the least aggressive: it suggested creating an account only after users had sent at least 10 prompts.

- Gemini's main page explicitly states that the chatbot can make mistakes. ChatGPT shows a similar disclaimer after the first prompt, also warning users not to share sensitive information and noting that chats may be reviewed and used to train its models. Grok, by contrast, does not display such a statement on the chat screen, although one is included in its Terms of Service.⁴ Because these chatbots can make mistakes, transparency about sources and access to links are crucial for checking the accuracy of their answers, particularly in sensitive areas like tax returns.

- A highly concerning finding is that Gemini provides no source references, which makes its information hard to verify. ChatGPT is inconsistent, offering links only for certain highlighted words, with explanatory text in a sidebar. Grok, in contrast, offers more transparency by providing an extensive list of sources with direct links to the content. However, a link alone does not guarantee that the AI interpreted or used the information correctly, so users still navigate these tools at their own risk.

Methodology and sources

The study aims to provide insight into the chatbots' behavior and the risks of using them in sensitive contexts such as tax return assistance. To simulate user behavior, three starting prompts were used: a neutral “tax return,” a more engaging “help me with my tax return,” and a direct question, “how can you help me with my tax return?” After the initial prompt, user replies were limited to “yes” if the chatbot suggested an action, or “no” if it requested personal information. If the interaction stalled, the chatbot's first suggestion was used to continue the conversation. Each initial prompt was entered into a new chat thread in Google Chrome's Incognito mode, with a VPN connected to an Australian server. All interactions were conducted in English. Data was collected on March 12, 2026.

Of the five AI chatbots and tools with the highest user traffic,¹ OpenAI's ChatGPT, Google's Gemini, and xAI's Grok were selected for analysis because their free versions can be used without signing in. Anthropic's Claude and DeepSeek were excluded because they require users to create an account before use. No additional settings were adjusted after accessing the AI chatbot websites.

Note: The same prompts do not always produce identical results, so the first recorded response was used for analysis.

For the complete research material behind this study, visit here.