
AI training on social media: can you really say no?

If you've ever shared something on social media, your content likely helped train an AI model. While most popular social media platforms offer global connections, they also give their owners vast stores of data to use for AI development. But what if you want your data to remain private and don’t want your personal posts, comments, or pictures to be used for artificial intelligence training? It turns out that opting out is not a straightforward process.

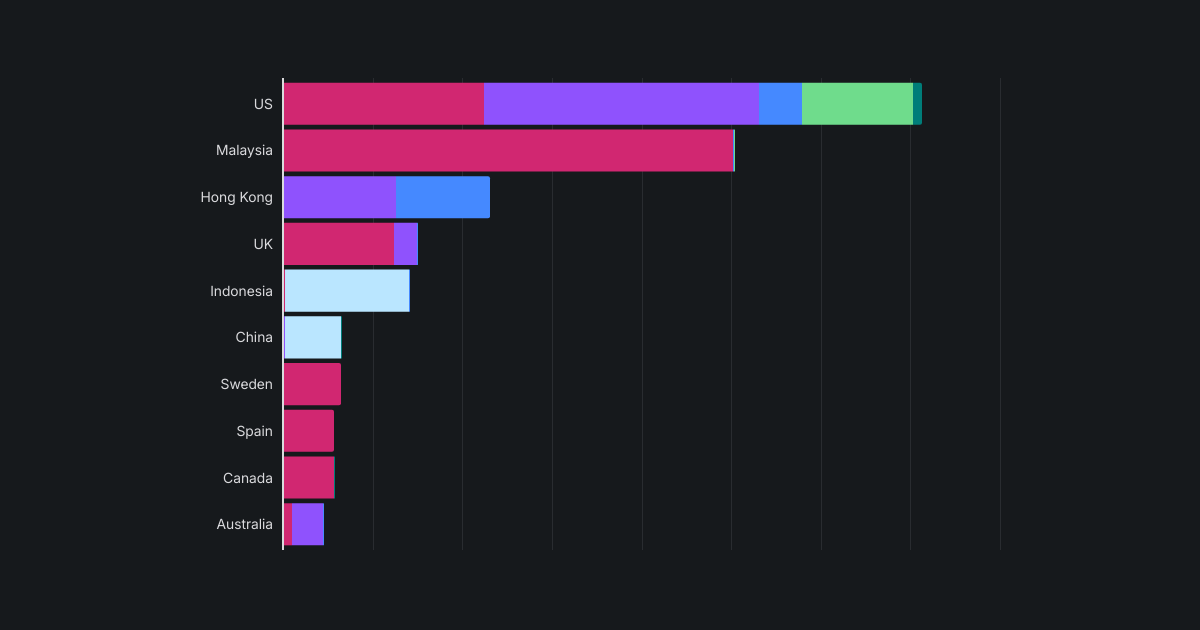

Our Research Team examined opt-out procedures across the 10 most popular social media platforms and found that protecting your data isn't as simple as pushing a single button. Most of these platforms require users to navigate complex processes, making it difficult for individuals to opt out of AI training.

Key insights

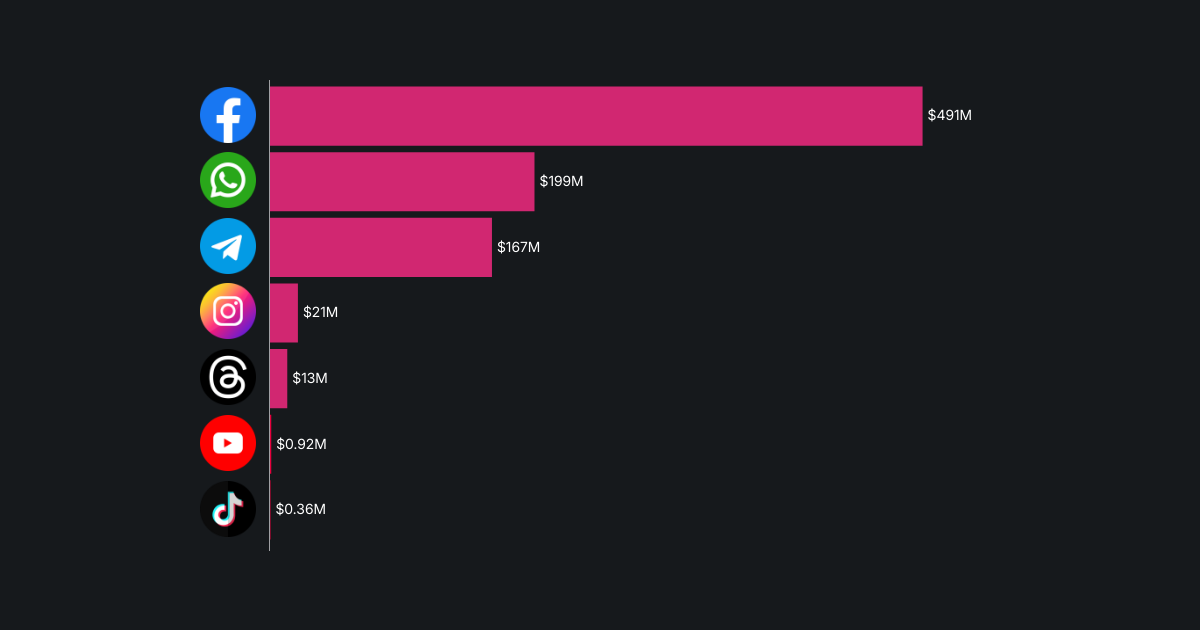

- Facebook, Instagram, and TikTok are the most popular social media platforms globally, and they are all trying to train their AI models with as much user data as possible. Opting out of AI training on these platforms is not only complex but often restricted by region. Instead of providing a simple toggle-off button, these three social media platforms direct users to dense privacy policy pages and require them to submit a request form, often leaving users to cite their country's data protection laws. TikTok obscures the process the most, requiring 19 actions to request that your data not be used for AI training.

- Facebook's and Instagram’s processes for publicly posted user data are shorter, requiring 8 actions. However, they still involve navigating specific privacy pages, a process that lacks the clarity of the toggle button found on other platforms. While users in any country can submit these forms, Facebook, Instagram, and TikTok do not necessarily have to comply with them if a user's country lacks relevant laws. In general, EU, EEA, and UK citizens have the best protection under GDPR. Other countries, such as the US, don’t have such safeguards, and publicly posted user data is fair game for training AI models without a clear possibility to opt out.

- In contrast, Snapchat, LinkedIn, X (formerly Twitter), and Pinterest offer a simpler process: it takes three to five actions to reach the privacy settings in their mobile apps and toggle off AI training consent. However, the default setting across these platforms is “consent on,” meaning users must proactively turn it off. If you haven’t disabled this setting, it’s likely your data has already been used to train AI models, and this cannot be undone: opting out only prevents future use.

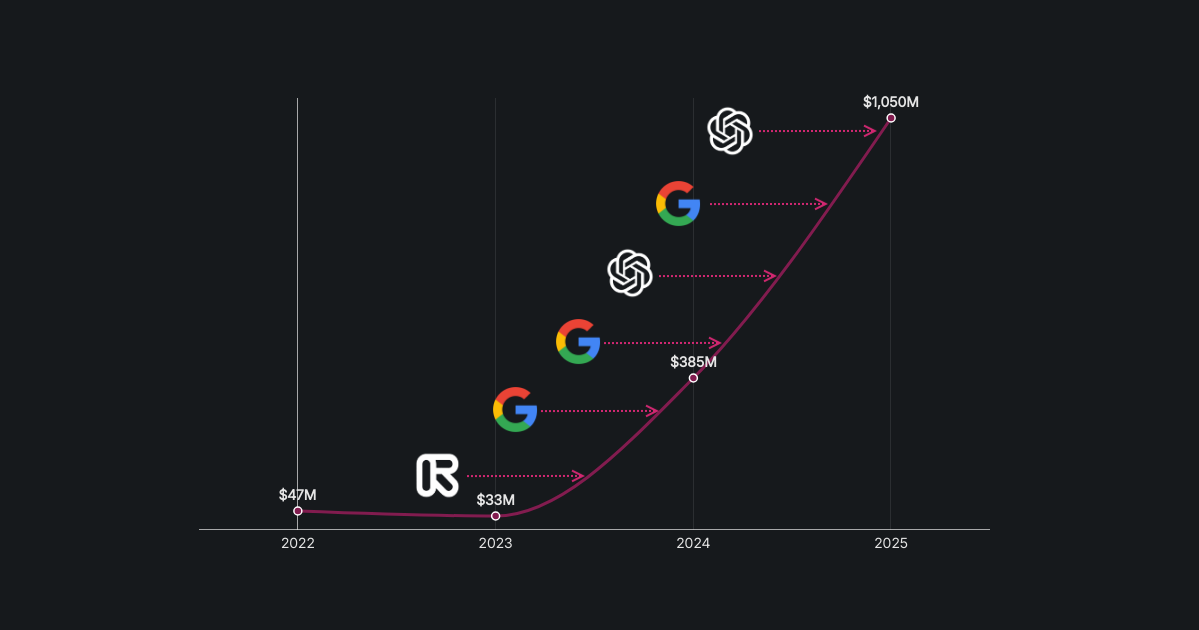

- Reddit stands out by offering no option for users to opt out of AI model training. All users’ forum discussions are available for global AI model development. In 2024, Reddit signed contracts with Google and OpenAI, reportedly worth $203 million¹, granting them access to vast amounts of user-generated discussions for training their AI models. While Reddit prohibits unauthorized scraping, it willingly licenses its data to selected partners under formal agreements, yet provides users with no clear way to opt out.

- By default, social media platforms such as Facebook, Instagram, X (formerly Twitter), and Snapchat train AI models on public user data, including profiles, posts, and public interactions. Meanwhile, platforms like TikTok, Pinterest, and LinkedIn take a more aggressive approach, reserving the right to use both public and private data for AI model development. TikTok stands out in this group, as it reportedly analyzes not only public videos but also private videos and even unsaved drafts for AI training.

- Overall, social media platforms strongly prefer to collect as much user data as possible to train their AI models, with 8 out of 10 analyzed platforms doing so by default. Of the remaining two, Kwai focuses on markets in China and Brazil and lacks transparency about its AI training practices. Meanwhile, Discord is the only major platform to explicitly state that it does not use user data for AI training.

Methodology and sources

This study analyzed the 10 most popular social media platforms, ranked by Cloudflare's data² on web traffic and user engagement. The focus was to determine whether users can opt out of AI training, what the default settings are, and whether opt-out options are available across regions. We downloaded the mobile applications for all platforms, with the exception of Kwai, which was not available in our region for analysis. For each accessible app, we assessed the default settings for AI training consent. Additionally, we attempted to opt out of the AI training process and quantified the difficulty by counting the number of actions required. An "action" was defined as a click, entering personal information, or toggling off consent buttons.

For Discord, Reddit, and Kwai, opt-out options were not readily identifiable within the apps, so we conducted a review of their official privacy policy pages to understand the companies' stated positions on AI model training. In the case of Reddit, we also noted publicly available information regarding partnerships with AI companies for data usage.
